Stop Wasting Thousands on Vibe Coding: The Costly Disaster of Lazy AI and the Revolutionary 2026 Agentic Blueprint for Zero-Bug Code

Vibe coding—it’s when developers toss fuzzy prompts at an LLM and hope for the best. They keep poking it until something “feels right,” and then ship the result. Engineers call this “prompt and pray.” Companies call it Technical Debt 2.0, mostly because it quietly drains time and money. The real cost? Hours lost in debugging, emergency fixes, and late-night outages that never should’ve happened.

I’ve seen teams burn through upwards of $50K each quarter just by trusting this lazy shortcut. One fintech company I worked with spent three solid months rolling out a “simple” new feature, but it never settled down. The engineers were good. The problem was the process—vibe coding, no structure in sight.

That all changes with the 2026 agentic blueprint. Instead of crossing your fingers and improvising, you follow a focused framework. Teams swap guessing for engineering discipline: live feedback, self-checking code, and errors caught before they cause problems. Reliable, zero-bug code becomes a base expectation.

This isn’t just buzz. Engineering practice has to level up, and agentic engineering is how experienced teams are doing it.

The Problem: Why LLM Coding Needs a Framework

LLMs are powerhouses at generating code, but without guardrails, they run wild. With no framework in place, you prompt, the model fabricates code, you adjust, it regresses, and the whole cycle repeats. Eventually, production breaks and you’re stuck firefighting for weeks.

Here’s why “prompt and hope” falls apart:

- No environment awareness. The model doesn’t see real-time logs, database states, or live errors.

- Single-threaded thinking. One agent—or one person—tries to handle everything, losing critical info on the way.

- Zero verification. Code launches with silent bugs that only show up when users are already in trouble.

This is how technical debt multiplies fast. Old codebases already hid landmines—now vibe coding just buries more on top. Maintenance costs spike by 30-50%. Release cycles double or triple. It’s a mess.

The picture from 2025-2026 is rough. Teams stuck in this loop keep redoing work and falling behind, while teams using agentic frameworks cut their bug rates and ship cleaner releases.

Let’s be honest: “Prompt and hope” isn’t engineering. It’s just gambling.

Solution: The 2026 Agentic Blueprint

Here’s where things turn around. With the agentic blueprint, you treat your AI agents like disciplined coworkers, not magic word machines.

This isn’t theory: the three-step blueprint has already proved itself in the wild. You use the Model Context Protocol (MCP) for real-world visibility, orchestrate several agents in a clear workflow, and layer in constant checks.

Here’s how teams actually work with it:

Step 1: See Everything with Model Context Protocol (MCP)

No more prompting in the dark. Now agents plug directly into your live environment.

MCP is an open protocol: think of it as a secure, two-way channel between your AI and your actual software systems. Through MCP servers, your agent can check error logs, databases, APIs, git history, and live metrics in real time. The agent works from your real codebase, not an imagined one.

Picture this: an agent asks, “What are the latest errors in the payment service?” MCP feeds it only what matters. The agent’s not overwhelmed, and there’s way less nonsense in its answers.
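To make that exchange concrete, here is a minimal sketch of what such a tool call could look like on the wire. MCP messages are JSON-RPC 2.0, and `tools/call` is the method a client uses to invoke a tool. Everything else is assumed for illustration: the `get_recent_errors` tool, the in-memory `LOGS` list, and the `handle_request` function are hypothetical stand-ins. A real server would use an official MCP SDK and a proper transport rather than a bare function.

```python
import json

# Hypothetical in-memory log store standing in for a real logging backend.
LOGS = [
    {"service": "payments", "level": "ERROR", "msg": "card declined: gateway timeout"},
    {"service": "payments", "level": "INFO", "msg": "charge succeeded"},
    {"service": "search", "level": "ERROR", "msg": "index unavailable"},
    {"service": "payments", "level": "ERROR", "msg": "duplicate idempotency key"},
]


def get_recent_errors(service: str, limit: int = 5) -> list[str]:
    """Hypothetical tool: return the most recent error messages for one service."""
    errors = [e["msg"] for e in LOGS if e["service"] == service and e["level"] == "ERROR"]
    return errors[-limit:]


TOOLS = {"get_recent_errors": get_recent_errors}


def handle_request(raw: str) -> str:
    """Answer a JSON-RPC 2.0 `tools/call` request, roughly as an MCP server would."""
    req = json.loads(raw)
    base = {"jsonrpc": "2.0", "id": req.get("id")}
    if req.get("method") != "tools/call":
        return json.dumps({**base, "error": {"code": -32601, "message": "Method not found"}})
    params = req["params"]
    tool = TOOLS.get(params["name"])
    if tool is None:
        return json.dumps({**base, "error": {"code": -32602, "message": "Unknown tool"}})
    lines = tool(**params.get("arguments", {}))
    # Tool output goes back as a list of content items; here, a single text item.
    result = {"content": [{"type": "text", "text": "\n".join(lines)}], "isError": False}
    return json.dumps({**base, "result": result})


# The agent's question, expressed as a tool call.
request = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "get_recent_errors", "arguments": {"service": "payments", "limit": 2}},
})
response = json.loads(handle_request(request))
print(response["result"]["content"][0]["text"])
```

The point is the filtering: the agent gets back the two payment errors it asked about, not the whole log stream.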

Right there, you wipe out about 40% of the usual vibe-coding failures.

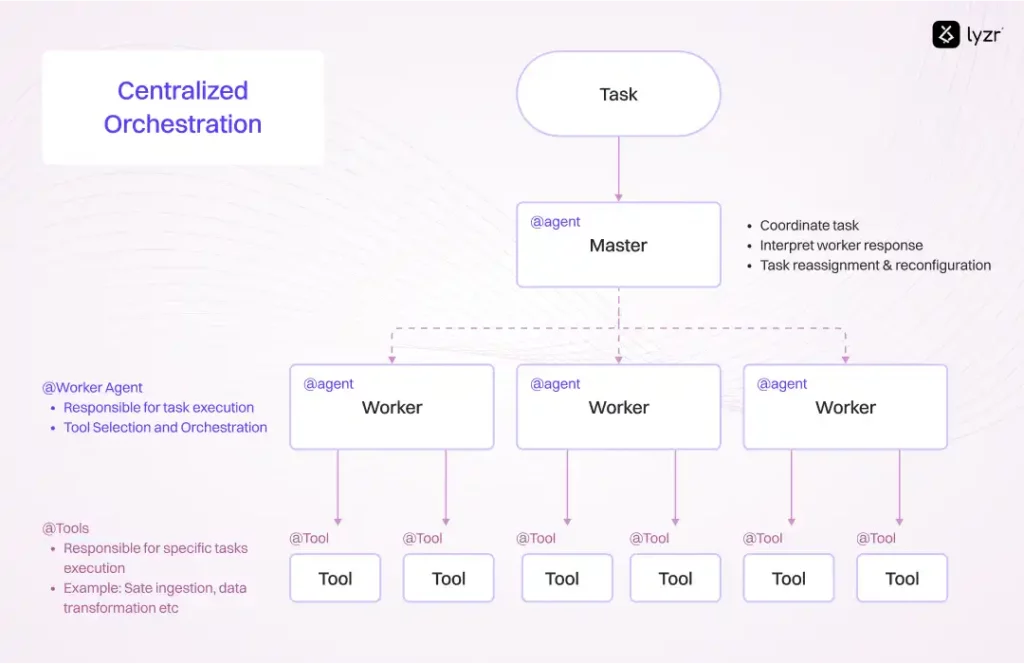

Step 2: Multi-Agent Orchestration—The Plan, Code, Test, Fix Loop

One agent is clever. But a squad, each with a role? Much more powerful.

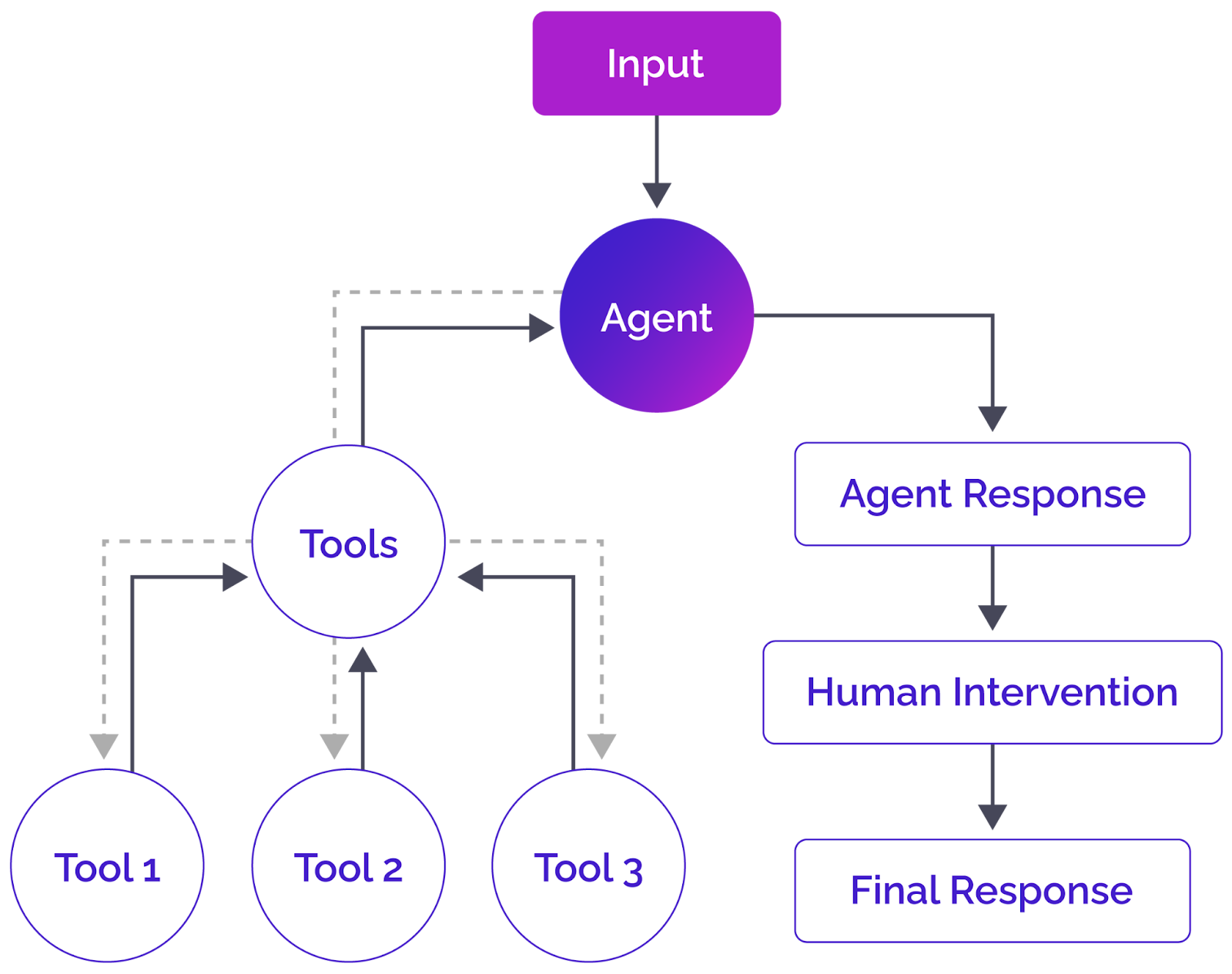

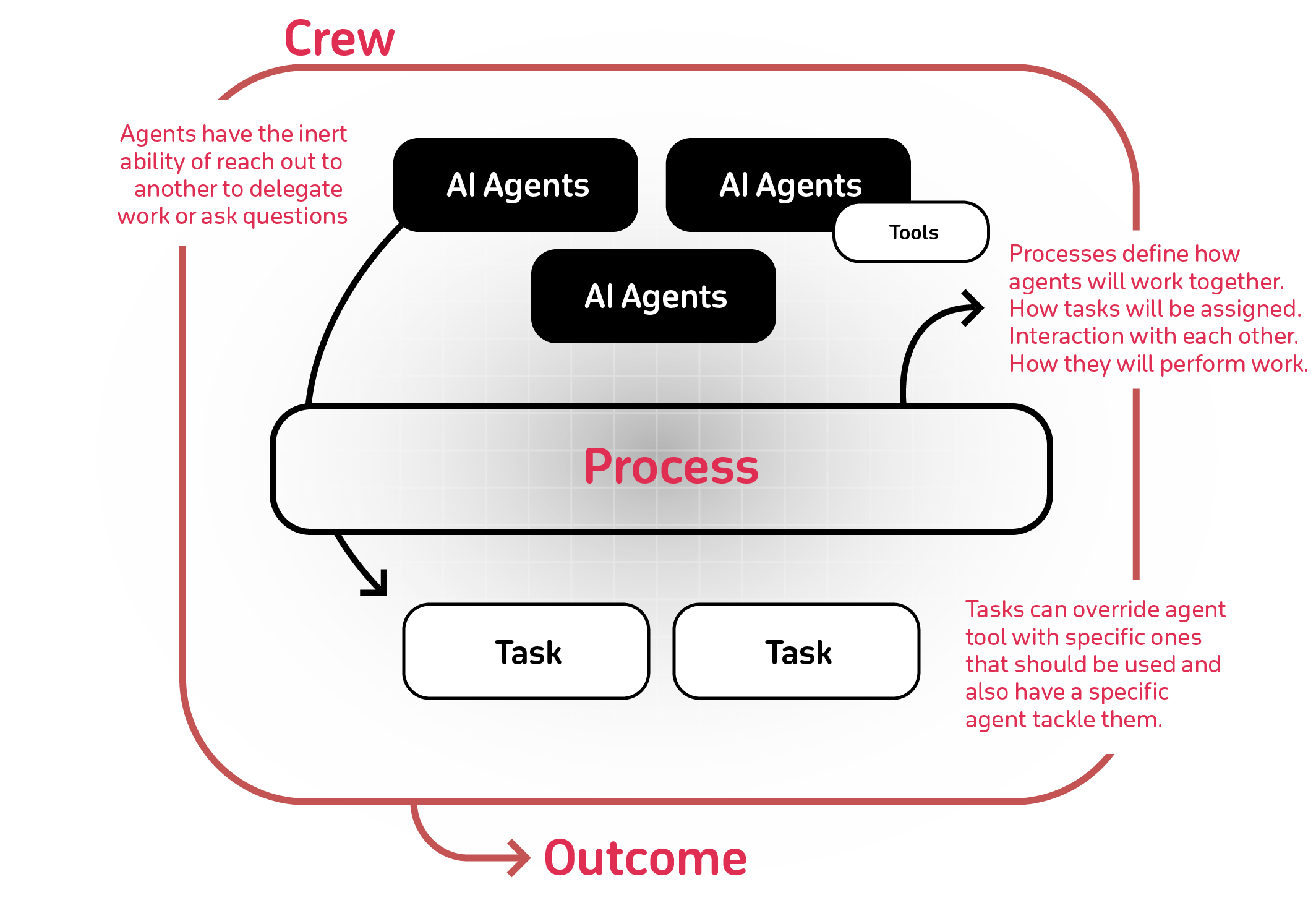

Here’s how it breaks down with frameworks like LangGraph or CrewAI:

- The planner agent splits your task into clear, bite-sized steps, each with an acceptance test.

- The coder agent writes the real code, pulling context through MCP.

- The tester agent checks the code—unit tests, integration, security, whatever’s needed.

- The fixer agent cycles through and patches any failures—automatically.

This loop runs until everything passes. Only then does a human even review the pull request.
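Here is a rough sketch of that loop in plain Python. It is not LangGraph or CrewAI code: the four functions are deterministic stubs standing in for model-backed agents, and `plan_task`, `write_code`, `run_tests`, `fix_code`, and the discount example are all made up for illustration. The `max_attempts` cap is also an assumption; in practice you want some limit so a stubborn failure escalates to a human instead of looping forever.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    description: str
    acceptance_test: Callable[[dict], bool]  # receives the namespace the code defined


def plan_task(task: str) -> list[Step]:
    """Stub planner: one step, with an acceptance test that pins the expected behaviour."""
    return [Step(
        description=f"{task}: implement apply_discount(price, percent)",
        acceptance_test=lambda ns: ns["apply_discount"](200, 10) == 180,
    )]


def write_code(step: Step) -> str:
    """Stub coder: returns a first draft with a deliberate bug (adds instead of subtracts)."""
    return "def apply_discount(price, percent):\n    return price + price * percent / 100\n"


def run_tests(code: str, step: Step) -> bool:
    """Stub tester: load the code and run the step's acceptance test.

    A real setup would execute candidate code in a sandbox, not with a bare exec().
    """
    namespace: dict = {}
    try:
        exec(code, namespace)
        return bool(step.acceptance_test(namespace))
    except Exception:
        return False


def fix_code(code: str, step: Step) -> str:
    """Stub fixer: a real one would send the failure details back to a model."""
    return code.replace("price + price", "price - price")


def run_pipeline(task: str, max_attempts: int = 3) -> list[tuple[str, int]]:
    """Plan, then loop Code -> Test -> Fix per step until the acceptance test passes."""
    results = []
    for step in plan_task(task):
        code = write_code(step)
        for attempt in range(1, max_attempts + 1):
            if run_tests(code, step):
                results.append((code, attempt))
                break
            code = fix_code(code, step)
        else:
            raise RuntimeError(f"still failing after {max_attempts} attempts: {step.description}")
    return results


for code, attempts in run_pipeline("checkout discount"):
    print(f"passed on attempt {attempts}")
    print(code)
```

With these stubs, the first draft fails its acceptance test, the fixer patches it, and the second attempt passes. Only at that point would the code go to a human reviewer.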

Teams using this system aren’t guessing, and they aren’t living in crisis mode. They’re doing real engineering, with AI as a proper teammate.

This Plan → Code → Test → Fix cycle is the heart of the 2026 agentic blueprint. It turns weeks of back-and-forth into hours of reliable output.

Step 3: Verification Layers – Self-Correcting AI Agentic Workflows

The final safeguard: layered verification that runs before human review.

- Static analysis agents

- Runtime simulation agents

- Security and compliance agents

- Human-in-the-loop only for final sign-off

These agentic workflows self-correct in real time. The result? Near-zero bugs reaching production.
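As a rough illustration of the layers above, here is a small sketch that chains three checks before anything reaches a human. The rules are deliberately tiny and the function names (`static_check`, `security_check`, `runtime_check`, `verify`) are hypothetical; real layers would call linters, scanners, and sandboxed test runs. One choice made here: the security check runs before the runtime check, so code that fails it never gets executed.

```python
import ast


def static_check(source: str) -> list[str]:
    """Layer 1: does the candidate code parse at all?"""
    try:
        ast.parse(source)
        return []
    except SyntaxError as exc:
        return [f"static: syntax error on line {exc.lineno}"]


def security_check(source: str) -> list[str]:
    """Layer 2: flag direct calls to eval() or exec() (a deliberately tiny rule set)."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in {"eval", "exec"}
        ):
            findings.append(f"security: call to {node.func.id}() on line {node.lineno}")
    return findings


def runtime_check(source: str) -> list[str]:
    """Layer 3: load the module and report any exception raised while loading.

    A real pipeline would do this in a sandbox, not in the current process.
    """
    try:
        exec(compile(source, "<candidate>", "exec"), {})
        return []
    except Exception as exc:
        return [f"runtime: {type(exc).__name__}: {exc}"]


LAYERS = [static_check, security_check, runtime_check]


def verify(source: str) -> list[str]:
    """Run the layers in order and stop at the first one that reports findings."""
    for layer in LAYERS:
        findings = layer(source)
        if findings:
            return findings
    return []


candidates = {
    "clean": "def total(xs):\n    return sum(xs)\n",
    "unsafe": "def run(cmd):\n    return eval(cmd)\n",
    "crashes": "limit = 10 / 0\n",
}
for name, source in candidates.items():
    findings = verify(source)
    print(name, "->", findings or "ready for human sign-off")
```

Only the clean candidate makes it through all three layers. The other two come back with findings a fixer agent could act on.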

The agentic blueprint doesn’t remove humans — it elevates them to architecture and strategy while the machines handle the grind.

Tool Comparison: Claude Code vs. Cursor vs. Enterprise Frameworks

The leading AI coding tools of 2026 have matured into true agentic platforms. Here’s the honest breakdown:

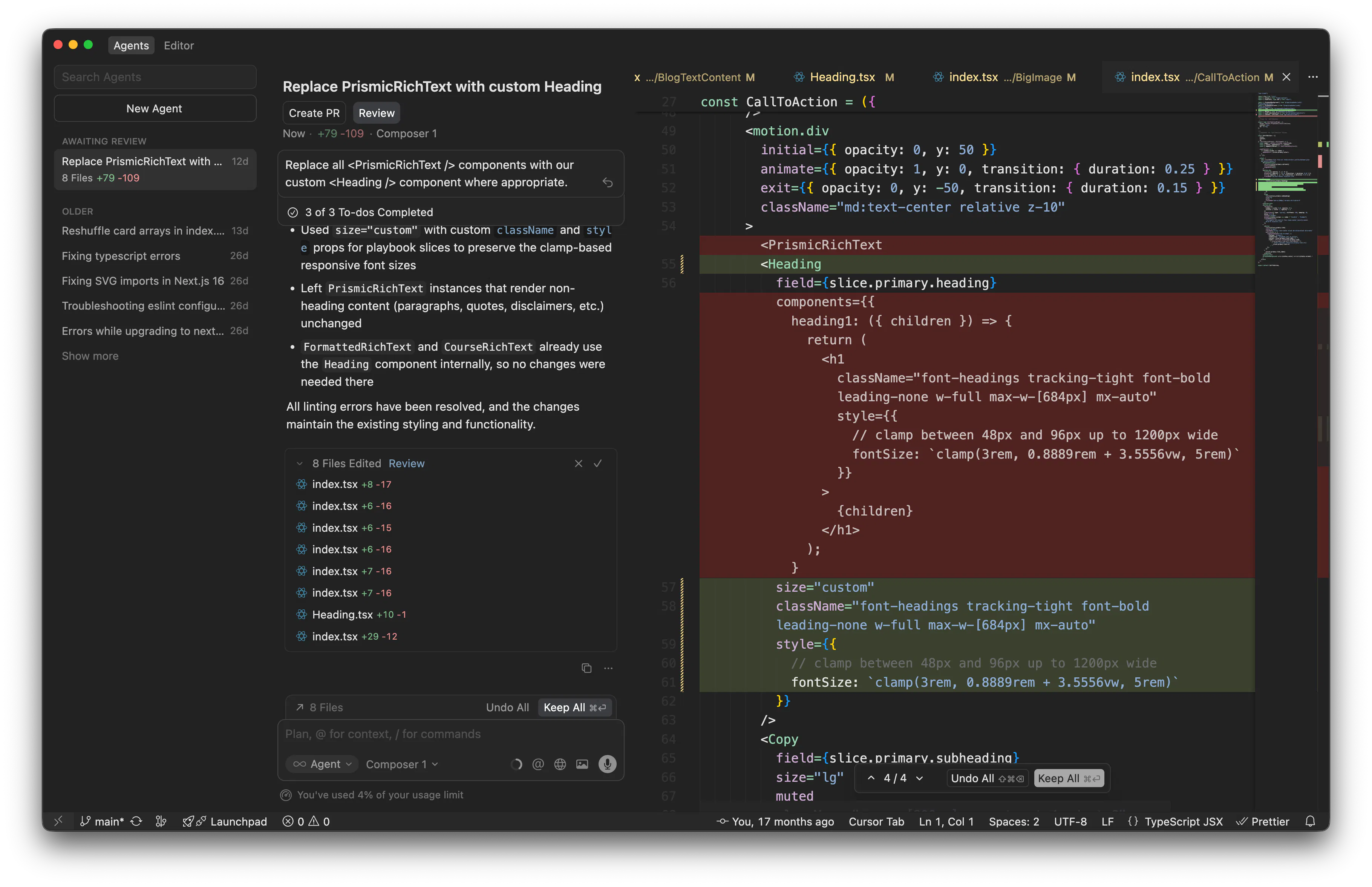

Cursor offers a top-notch coding environment. The autocomplete feels smooth, and the cloud agents really boost productivity—it’s fantastic if you want to move fast as an individual. But when you’re tackling big projects that stretch over weeks, Cursor doesn’t really handle autonomy like the fully agentic setups do. It still needs plenty of hands-on guidance from a human. So, if your team values speed and wants to work in something familiar, like a VS Code fork, Cursor’s the way to go.

Claude Code is different. Its reasoning is strong, and it integrates natively with MCP. You get genuine terminal and IDE autonomy, and developers report reworking code roughly 30-35% less often. If you’re dealing with a complicated codebase, it’s hard to beat. It’s designed for enterprise teams that won’t compromise on quality and want AI that truly self-corrects. The catch? There’s a slightly steeper learning curve, especially if your team’s new to fully agentic workflows.

LangGraph and CrewAI aren’t coding AIs themselves. They’re the frameworks behind the scenes. LangGraph gives you low-level, stateful orchestration, which makes long-running workflows easier to keep reliable. CrewAI works at a higher level, with role-based delegation and memory built in. Combine them, and you get the orchestration layer that turns tools like Cursor or Claude Code from individual assistants into a coordinated system. Think of them as the scaffolding holding everything together.

| Tool | Autonomy Level | MCP Native | Best For | Rework Reduction |
|---|---|---|---|---|
| Vibe Coding | None | No | Quick prototypes | 0% |
| Cursor | High | Via plugins | IDE-first teams | ~25% |
| Claude Code | Very High | Yes | Complex enterprise code | ~35% |
| LangGraph + CrewAI | Full Orchestration | Framework level | Production agentic systems | 45-50% |

The ROI: Why the Agentic Blueprint Is the Only Path to Zero-Bug Code in 2026

Here’s the deal: if you’re still clinging to unstructured “vibe coding” in 2026, you’re already behind. The agentic blueprint isn’t just a trendy fix — it’s become the basic requirement.

Teams who made the switch are seeing real results. Debugging takes less than half the time. Features roll out three to five times faster. Production incidents from code changes? Pretty much gone. Developers finally get to focus on creative work, not endless bug hunts.

Think about the money. If ten engineers are losing 15 hours a week to rework, you’re burning over $150K a year, just on salaries. With agentic workflows, that’s money you get back fast — sometimes in just weeks.
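The arithmetic behind that figure is easy to check. The sketch below uses the team size and weekly rework hours from the paragraph above; the 52-week year and the hourly rates are assumptions for illustration, not figures from any survey.

```python
ENGINEERS = 10
REWORK_HOURS_PER_WEEK = 15  # per engineer, as stated in the text
WEEKS_PER_YEAR = 52         # assumption: no allowance for leave or holidays


def annual_rework_cost(hourly_rate: float) -> float:
    """Yearly salary cost of rework at a given hourly rate (USD)."""
    return ENGINEERS * REWORK_HOURS_PER_WEEK * WEEKS_PER_YEAR * hourly_rate


hours = ENGINEERS * REWORK_HOURS_PER_WEEK * WEEKS_PER_YEAR
print(f"rework hours per year: {hours:,}")

# Hypothetical hourly rates; plug in your own team's numbers.
for rate in (20, 50, 75):
    print(f"${rate}/hour -> ${annual_rework_cost(rate):,.0f} per year")
```

That is 7,800 hours a year. Even at an assumed $20 an hour the bill is $156,000, and at $50 an hour it is $390,000, so "over $150K" is the conservative end.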

Zero-bug code isn’t some impossible goal anymore. With solid agentic engineering, it actually happens.

Conclusion:

It’s time to drop lazy, expensive coding habits. The agentic blueprint, built with Model Context Protocol, smart agent orchestration, and tough verification, is the only proven way forward for reliable, bug-free code.

Beeznez Tech doesn’t chase hype. We build for the long run. Start simple: weave MCP into one workflow. Layer on orchestration tools like LangGraph or CrewAI. Watch what happens — bugs start vanishing.

Adopt this blueprint now, and your team will set the pace in 2026 and beyond. Ignore it, and you’ll keep paying for hidden technical debt.

You decide. Stop wasting money. Start building real systems. The agentic blueprint is ready for you.

Frequently Asked Questions (FAQs)

1. So, what is “vibe coding,” and why do people call it a disaster?

Vibe coding is basically chucking vague prompts at an AI coding tool — like ChatGPT, Claude, or Gemini — and fiddling until the code “feels right.” There’s not much actual code review, structured testing, or thoughtful design. Andrej Karpathy came up with the term in early 2025. The whole vibe is, “forget the code even exists” — just prototype fast or mess around on a weekend project.

But for real production work or serious teams, vibe coding opens the door to hidden bugs, messy logic, mountains of technical debt, and expensive debugging. Teams end up redoing work 30–50% more often, break production all the time, and spend tens of thousands fixing problems that never should have happened. It’s fun for experiments, but a nightmare for anything important.

2. How’s the 2026 Agentic Blueprint different from just using Cursor or Claude Code?

The Agentic Blueprint isn’t just another tool — it’s a system. You get:

- Environment-awareness with the Model Context Protocol (MCP), which lets AI agents see logs, databases, and what’s happening right now.

- Multi-agent orchestration: different agents for planning, coding, testing, and fixing, running in loops.

- Layered verification: checking and fixing before a human even looks.

Cursor (a great IDE) and Claude Code (smart reasoning and MCP built in) are awesome tools, but solo, they still lean toward “AI assist.” Plug them into the Blueprint, and you get a workflow meant to stomp out bugs and deliver reliable code — so it’s not just “prompt and pray” anymore.

3. What’s Model Context Protocol (MCP), and why’s it a big deal in 2026?

Think of MCP like a “USB-C for AI.” It’s an open standard (originally from Anthropic, now under the Linux Foundation’s Agentic AI Foundation) that lets AI agents securely connect to files, databases, APIs, logs, and more. Instead of making things up, agents pull real, current info when they need it.

That means less hallucination, more accuracy, and real autonomy. By 2026, MCP is everywhere, powering everything from personal assistants to big coding agents, with tens of thousands of community-built servers.

4. Which coding AI rules in 2026 — Cursor, Claude Code, Windsurf, or something else?

There’s no universal “best,” but here’s the rundown:

- Cursor: Polished IDE (a VS Code fork), shines for big codebases and speed. Most pros love it for daily work.

- Claude Code: Deep reasoning, complex changes, terminal autonomy, MCP built in. Use it when quality and self-fixes matter.

- Windsurf (used to be Codeium’s editor): Great free tier, decent agentic features, good for solo devs or teams on a budget.

- Others, like Copilot or Zed, are solid but not as advanced in multi-agent features.

If you want zero-bug code in production, don’t just use these solo. Pair them with orchestration frameworks for full power.

5. What are the top multi-agent orchestration frameworks for coding in 2026?

Here are some leading options:

- LangGraph (from LangChain): Handles complex workflows with graphs, conditional routing, shared state. Great for production and customization.

- CrewAI: Fast prototyping with role-based “crew” (Planner, Coder, Tester, etc.). Super easy for beginners, though not as flexible for deep tweaks.

- Others: OpenAI Agents SDK, Google ADK, Anthropic’s Claude Agent SDK. All are up-and-coming, and each is closely tied to its vendor’s models.

LangGraph is your best bet for mission-critical projects. CrewAI is fastest for demos or quick prototypes. Use these with tools like Claude Code to build out the Agentic Blueprint.
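If "graphs, conditional routing, shared state" sounds abstract, here is a toy version of the idea in plain Python. To be clear, this is not LangGraph’s API; the node functions, the `NODES`/`EDGES`/`ROUTERS` tables, and `run_graph` are all hypothetical, written only to show how nodes pass a shared state along and how one conditional edge can send work back for another round.

```python
from typing import Callable, Optional

State = dict  # shared state: every node reads it and returns an updated copy


def plan_task(state: State) -> State:
    return {**state, "steps": ["write handler", "add tests"]}


def write_code(state: State) -> State:
    return {**state, "draft": state.get("draft", 0) + 1}


def run_tests(state: State) -> State:
    # Stub: pretend the second draft is the first one that passes.
    return {**state, "passed": state["draft"] >= 2}


def request_review(state: State) -> State:
    return {**state, "status": "ready for human review"}


NODES: dict[str, Callable[[State], State]] = {
    "plan": plan_task,
    "code": write_code,
    "test": run_tests,
    "review": request_review,
}
# Fixed edges; None marks the end of the graph.
EDGES: dict[str, Optional[str]] = {"plan": "code", "code": "test", "review": None}


def route_after_test(state: State) -> str:
    """Conditional edge: loop back to coding until the tests pass."""
    return "review" if state["passed"] else "code"


ROUTERS = {"test": route_after_test}


def run_graph(start: str, state: State, max_steps: int = 20) -> tuple[State, list[str]]:
    """Walk the graph from `start`, recording which nodes ran."""
    trace: list[str] = []
    current: Optional[str] = start
    for _ in range(max_steps):
        if current is None:
            return state, trace
        trace.append(current)
        state = NODES[current](state)
        router = ROUTERS.get(current)
        current = router(state) if router else EDGES[current]
    raise RuntimeError("graph did not finish within max_steps")


final_state, trace = run_graph("plan", {"task": "add refund endpoint"})
print(" -> ".join(trace))
print(final_state["status"])
```

Frameworks add persistence, retries, and tooling on top of this shape, but the core is the same: small nodes, one shared state, and routing decisions made from that state.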

6. Should we ever use vibe coding, or just stop it altogether?

Vibe coding is great for early ideas, fast prototypes, learning, or weekend hacks. It brings speed and creativity when failing costs nothing.

But for any serious work — enterprise, production, team projects — it’s dangerous. So, use vibe coding just to spark ideas, then quickly switch to structured Agentic workflows to build and ship. Don’t send unverified vibe code out into the world.

7. What’s the real ROI for teams moving to the Agentic Blueprint?

Teams using the Blueprint in early 2026 saw:

- 40–60% less time spent debugging or reworking code

- Features delivered 3–5 times faster

- Production incidents from bugs almost gone

- Developers spend time architecting and innovating, not just patching fires

If you’ve got a 10-person team wasting 15 hours each week on fixes, you’re looking at $100K–$200K in annual savings — sometimes paying for the new system in just a few weeks. The Blueprint isn’t just saving money — it actually multiplies productivity.

8. Is zero-bug code even possible in 2026?

Not literally perfect, but honestly? Teams using the full Agentic Blueprint basically don’t see bugs getting to production anymore. Self-correction, real-time context, good checks — these catch stuff before a merge happens. With humans still guiding architecture, the result is a measurable boost, not just marketing fluff.

9. Why be skeptical of hype but optimistic about agentic engineering?

AI is flooded with wild claims (“Every coder replaced tomorrow!”). The fallout from unchecked vibe coding is proof enough of where that leads. But if you look at MCP adoption, multi-agent maturity, and real-world data from 2025–2026, disciplined systems are moving the needle. Beeznez Tech drops the hype and gets straight to what actually works: agentic engineering that delivers, not just talks.